Disclaimer: This is work in progress intended to consolidate information from various sources and may deviate from them. Please consult the sources for the exact content!

Last updated: 31-Mar-2024

[Microsoft Fabric] Polaris

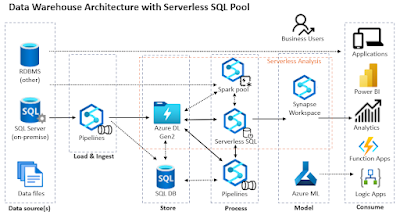

- {def} cloud-native analytical query engine over the data lake that follows a stateless micro-service architecture and is designed to execute queries in a scalable, dynamic and fault-tolerant way [1], [2]

- the engine behind the serverless SQL pool [1] and Microsoft Fabric [2]

- petabyte-scale execution [1]

- highly-available micro-service architecture

- data and query processing is packaged into units (aka tasks) [1]

- can be readily moved across compute nodes and re-started at the task level [1]

- can run directly over data in HDFS and in managed transactional stores [1]

- [Azure Synapse] designed initially to execute read-only queries [1]

- ⇐ the architecture behind serverless SQL pool

- uses a completely new scale-out framework based on a distributed SQL Server query engine [1]

- fully compatible with T-SQL

- leverages the SQL Server single-node runtime and query optimizer (QO) [1]

- [Microsoft Fabric] extended with a complete transaction manager that executes general CRUD transactions [2]

- incl. updates, deletes and bulk loads [2]

- based on [delta tables] and [delta lake]

- Delta Lake currently supports only transactions within a single table [4]

- ⇐ the architecture behind lakehouses

- {goal} converge DWH and big data workloads [1]

- the query engine scales out for relational data and heterogeneous datasets stored in DFSs [1]

- needs a clean abstraction over the underlying data type and format, capturing just what’s needed for efficiently parallelizing data processing

- {goal} separate compute and state for cloud-native execution [1]

- all services within a pool are stateless

- data is stored durably in remote storage and is abstracted via data cells [1]

- ⇐ data is naturally decoupled from compute nodes

- the metadata and transactional log state is off-loaded to centralized services [1]

- multiple compute pools can transactionally access the same logical database [1]

- {goal} cloud-first [2]

- {benefit} leverages elasticity

- transactions need to be resilient to node failures on dynamically changing topologies [2]

- ⇒ the storage engine disaggregates the source of truth for execution state (including data, metadata and transactional state) from compute nodes [2]

- must ensure disaggregation of metadata and transactional state from compute nodes [2]

- ⇐ to ensure that the life span of a transaction is resilient to changes in the backend compute topology [2]

- ⇐ can change dynamically to take advantage of the elastic nature of the cloud or to handle node failures [2]

- {goal} use optimized native columnar, immutable and open storage format [2]

- uses delta format

- ⇐ optimized to handle read-heavy workloads with low contention [2]

- {goal} leverage the full potential of vectorized query processing for SQL [2]

- {goal} support zero-copy data sharing with other services in the lake [2]

- {goal} support read-heavy workloads with low contention [2]

- {goal} support lineage-based features [2]

- by taking advantage of delta table capabilities

- {goal} provide full SQL SI transactional support [2]

- {benefit} all traditional DWH requirements are met [2]

- incl. multi-table and multi-statement transactions [2]

- ⇐ Polaris is the only system that supports this [2]

- the design is optimized for analytics, specifically read- and insert-intensive workloads [2]

- mixes of transactions are supported as well

- {objective} no cross-component state sharing [2]

- {principle} encapsulation of state within each component to avoid sharing state across nodes [2]

- SI and the isolation of state across components allow transactions to be executed as if they were queries [2]

- ⇒ makes read and write transactions indistinguishable [2]

- ⇒ allows the optimized distributed execution framework to be fully leveraged [2]

- {objective} support Snapshot Isolation (SI) semantics [2]

- implemented over versioned data

- allows reads (R) and writes (W) to proceed concurrently over their own data snapshot

- R/W never conflict, and W/W of active transactions only conflict if they modify the same data [2]

- ⇐ all W transactions are serializable, leading to a serial schedule in increasing order of log record IDs [4]

- follows from the commit protocol for write transactions, where only one transaction can write the record with each record ID [4]

- ⇐ R transactions at the snapshot isolation level create no contention

- ⇒ any number of R transactions can run concurrently [4]

- the immutable data representation in log-structured tables (LSTs) allows dealing with failures by simply discarding the data and metadata files that represent uncommitted changes [2]

- similar to how temporary tables are discarded during query processing failures [2]
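
The SI rules above (readers work on their own snapshot, writers only conflict when they modify the same data, commits form a serial order of log records) can be illustrated with a small in-memory sketch. This is not Polaris code: `Store`, `Txn` and `WriteConflict` are hypothetical names, and a Python list stands in for the externalized transaction log.

```python
from dataclasses import dataclass, field


class WriteConflict(Exception):
    """Raised when a concurrent, already committed writer touched the same keys."""


@dataclass
class Store:
    # Hypothetical append-only log: entry i holds the changes of commit i.
    # Entries are never modified after being appended.
    log: list = field(default_factory=list)

    def begin(self):
        # A transaction sees the snapshot defined by the log length at start.
        return Txn(self, start_version=len(self.log))

    def read(self, key, version):
        # Scan the commits visible in the snapshot, newest first.
        for changes in reversed(self.log[:version]):
            if key in changes:
                return changes[key]
        return None


@dataclass
class Txn:
    store: Store
    start_version: int
    writes: dict = field(default_factory=dict)

    def read(self, key):
        # Own uncommitted writes first, then the snapshot.
        if key in self.writes:
            return self.writes[key]
        return self.store.read(key, self.start_version)

    def write(self, key, value):
        self.writes[key] = value  # private until commit

    def commit(self):
        # W/W check: a commit made after our snapshot that touches
        # one of our keys is a conflict; disjoint keys are fine.
        for changes in self.store.log[self.start_version:]:
            overlap = self.writes.keys() & changes.keys()
            if overlap:
                raise WriteConflict(f"concurrent update of {sorted(overlap)}")
        if self.writes:
            self.store.log.append(dict(self.writes))
        return len(self.store.log)  # new version = position in the serial order


store = Store()
setup = store.begin()
setup.write("a", 1)
setup.write("b", 1)
setup.commit()

reader = store.begin()          # snapshot at version 1
w1, w2, w3 = store.begin(), store.begin(), store.begin()
w1.write("a", 2)
w1.commit()                     # version 2
w2.write("b", 2)
w2.commit()                     # disjoint keys, so no conflict
w3.write("a", 3)                # same key as w1: commit() would raise WriteConflict
```

The reader keeps seeing `a == 1` for its whole lifetime and never blocks a writer, which is the reason R transactions create no contention. A real engine would check conflicts at file or row granularity and persist the log durably; both are simplified away here.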

- {feature} resize live workloads [1]

- scales resources with the workloads automatically

- {feature} deliver predictable performance at scale [1]

- scales computational resources based on workloads' needs

- {feature} efficiently handle both relational and unstructured data [1]

- {feature} flexible, fine-grained task monitoring

- a task is the finest grain of execution

- {feature} global resource-aware scheduling

- enables much better resource utilization and concurrency than traditional DWHs

- capable of handling partial query restarts

- maintains a global view of multiple queries

- the plan is to build autonomous workload management features on top of this global view

- {feature} multi-layered data caching model

- leverages

- SQL Server buffer pools for caching columnar data

- SSD caching

- the delta table and its log are immutable, so they can be safely cached on cluster nodes [4]

- {feature} tracks data lineage natively

- the transaction log can also be used for audit logging based on the commitInfo records [4]

- {feature} versioning

- maintain all versions as data is updated [1]

- {feature} time-travel

- {benefit} allows users to query point-in-time snapshots

- {benefit} allows erroneous updates to the data to be rolled back

- {feature} table cloning

- {benefit} allows a point-in-time snapshot of the data to be created based on its metadata
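
Versioning, time-travel and cloning all follow from keeping an immutable log of file-level changes. The sketch below assumes a simplified log in which every commit is a list of `("add", path)` / `("remove", path)` actions, loosely modeled on the add/remove actions of the Delta Lake log [4]; the function names and file names are hypothetical.

```python
def snapshot(log, version):
    """Return the set of data files visible after the first `version` commits."""
    files = set()
    for commit in log[:version]:
        for action, path in commit:
            if action == "add":
                files.add(path)
            elif action == "remove":
                files.discard(path)
    return files


def clone(log, version):
    """Metadata-only clone: copy the log entries, not the data files."""
    return [list(commit) for commit in log[:version]]


def restore(log, version):
    """Roll back by appending a commit that re-creates an older snapshot.

    History is preserved: nothing is deleted from the log.
    """
    current = snapshot(log, len(log))
    target = snapshot(log, version)
    commit = [("remove", p) for p in sorted(current - target)]
    commit += [("add", p) for p in sorted(target - current)]
    log.append(commit)
    return len(log)


log = [
    [("add", "f1.parquet")],
    [("add", "f2.parquet")],
    [("remove", "f1.parquet"), ("add", "f3.parquet")],  # erroneous update
]

as_of_v2 = snapshot(log, 2)      # time-travel to version 2
copy_v2 = clone(log, 2)          # point-in-time clone, no data copied
new_version = restore(log, 2)    # undo the erroneous update
```

Because data files are never modified in place, the clone and the original can share `f1.parquet` and `f2.parquet` safely; only the metadata diverges afterwards.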

- {concept} state

- drives the end-to-end life cycle of a SQL statement with transactional guarantees and top-tier performance [1]

- comprised of

- cache

- metadata

- transaction logs

- data

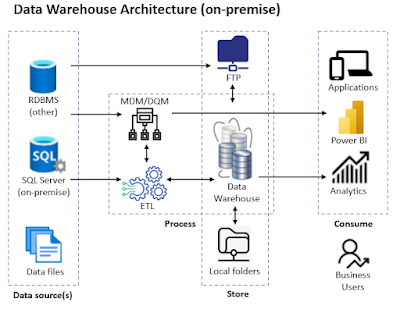

- [on-premises architecture] all state is in the compute layer

- relies on small, highly stable and homogeneous clusters with dedicated hardware for Tier-1 performance

- {downside} expensive

- {downside} hard to maintain

- {downside} limited scalability

- cluster capacity is bounded by machine sizes because of the fixed topology

- {concept} stateful architecture

- the state of in-flight transactions is stored in the compute node and is not hardened into persistent storage until the transaction commits [1]

- ⇒ when a compute node fails, the state of non-committed transactions is lost [1]

- ⇒ the in-flight transactions fail as well [1]

- often also couples metadata describing data distributions and mappings to compute nodes [1]

- ⇒ a compute node effectively owns responsibility for processing a subset of the data [1]

- its ownership cannot be transferred without a cluster restart [1]

- {downside} resilience to compute node failure and elastic assignment of data to compute are not possible [1]

- {concept} stateless compute architecture

- requires that compute nodes hold no state information [1]

- ⇒ all data, transactional logs and metadata need to be externalized [1]

- {benefit} allows applications to

- partially restart the execution of queries in the event of compute node failures [1]

- adapt to online changes of the cluster topology without failing in-flight transactions [1]

- caches need to be as close to the compute as possible [1]

- since they can be lazily reconstructed from persisted data they don’t necessarily need to be decoupled from compute [1]

- the coupling of caches and compute does not make the architecture stateful [1]

- {concept} [cloud] decoupling of compute and storage

- provides more flexible resource scaling

- the 2 layers can scale up and down independently adapting to user needs [1]

- customers pay for the compute needed to query a working subset of the data [1]

- is not the same as decoupling compute and state [1]

- if any of the remaining state held in compute cannot be reconstructed from external services, then compute remains stateful [1]
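
To make the stateless idea concrete, the following sketch shows task-level restart: each task reads its input cell from external storage, so a task that fails on one node can simply be re-run on another without restarting the whole query. It is an illustration under simplifying assumptions, not the Polaris scheduler; `make_node`, `execute`, `NodeFailure` and the cell names are hypothetical, and a dict stands in for remote storage.

```python
class NodeFailure(Exception):
    """Simulated loss of a compute node while running a task."""


def make_node(name, fail_on=()):
    """Build a stateless worker: output depends only on the task input."""
    def run(task_id, cell):
        if task_id in fail_on:
            raise NodeFailure(f"{name} failed while running {task_id}")
        return sum(cell)  # stand-in for real data processing
    run.name = name
    return run


def execute(tasks, nodes, storage):
    """Run every task, retrying a failed task on the next node.

    `storage` plays the role of durable remote storage; `results`
    would likewise live outside the compute nodes in a real system.
    """
    results = {}
    attempts = []
    for task_id, cell_id in tasks.items():
        for node in nodes:
            attempts.append((task_id, node.name))
            try:
                results[task_id] = node(task_id, storage[cell_id])
                break  # task done, move on to the next one
            except NodeFailure:
                continue  # only this task is retried, not the query
        else:
            raise RuntimeError(f"task {task_id} failed on every node")
    return results, attempts


storage = {"cell-0": [1, 2, 3], "cell-1": [4, 5], "cell-2": [6]}
tasks = {"t0": "cell-0", "t1": "cell-1", "t2": "cell-2"}
nodes = [make_node("node-a", fail_on={"t1"}), make_node("node-b")]

results, attempts = execute(tasks, nodes, storage)
```

Here `t1` fails on `node-a` and is retried on `node-b`, while `t0` and `t2` are unaffected. In a stateful design the failed node would own `cell-1`, and the query could not complete without it.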

References:

[1] Josep Aguilar-Saborit et al. (2020) POLARIS: The Distributed SQL Engine in Azure Synapse, Proceedings of the VLDB Endowment 13(12) (link)

[2] Josep Aguilar-Saborit et al. (2024) Extending Polaris to Support Transactions (link)

[3] Advancing Analytics (2021) Azure Synapse Analytics - Polaris Whitepaper Deep-Dive (link)

[4] Michael Armbrust et al. (2020) Delta Lake: High-Performance ACID Table Storage over Cloud Object Stores, Proceedings of the VLDB Endowment 13(12) (link)

R/W - read/write