|

| Business Intelligence Suite |

I tend to agree that one person can't do anymore "everything in the data space", as Christopher Laubenthal put it his article on the topic [1]. He seems to catch the essence of some of the core data roles found in organizations. Summarizing these roles, data architecture is about designing and building a data infrastructure, data engineering is about moving data, database administration is mainly about managing databases, data analysis is about assisting the business with data and reports, information design is about telling stories, while data science can be about studying the impact of various components on the data.

However, I find his analogy between a college's functional structure and the core data roles as poorly chosen from multiple perspectives, even if both are about building an infrastructure of some type.

Firstly, the two constructions have different foundations. Data exists in a an organization also without data architects, data engineers or data administrators (DBAs)! It's enough to buy one or more information systems functioning as islands and reporting needs will arise. The need for a data architect might come when the systems need to be integrated or maybe when a data warehouse needs to be build, though many organizations are still in business without such constructs. While for the others, the more complex the integrations, the bigger the need for a Data Architect. Conversely, some systems can be integrated by design and such capabilities might drive their selection.

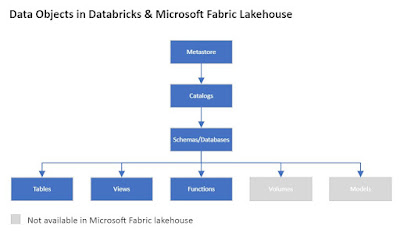

Data engineering is needed mainly in the context of the cloud, respectively of data lake-based architectures, where data needs to be moved, processed and prepared for consumption. Conversely, architectures like Microsoft Fabric minimize data movement, the focus being on data processing, the successive transformations it needs to suffer in moving from bronze to the gold layer, respectively in creating an organizational semantical data model. The complexity of the data processing is dependent on data' structuredness, quality and other data characteristics.

As I mentioned before, modern databases, including the ones in the cloud, reduce the need for DBAs to a considerable degree. Unless the volume of work is big enough to consider a DBA role as an in-house resource, organizations will more likely consider involving a service provider and a contingent to cover the needs.

Having in-house one or more people acting under the Data Analyst role, people who know and understand the business, respectively the data tools used in the process, can go a long way. Moreover, it's helpful to have an evangelist-like resource in house, a person who is able to raise awareness and knowhow, help diffuse knowledge about tools, techniques, data, results, best practices, respectively act as a mentor for the Data Analyst citizens. From my point of view, these are the people who form the data-related backbone (foundation) of an organization and this is the minimum of what an organization should have!

Once this established, one can build data warehouses, data integrations and other support architectures, respectively think about BI and Data strategy, Data Governance, etc. Of course, having a Chief Data Officer and a Data Strategy in place can bring more structure in handling the topics at the various levels - strategical, tactical, respectively operational. In constructions one starts with a blueprint and a data strategy can have the same effect, if one knows how to write it and implement it accordingly. However, the strategy is just a tool, while the data-knowledgeable workers are the foundation on which organizations should build upon!

"Build it and they will come" philosophy can work as well, though without knowledgeable and inquisitive people the philosophy has high chances to fail.

Previous Post <<||>> Next Post

Resources:

[1] Christopher Laubenthal (2024) "Why One Person Can’t Do Everything In Data" (link)