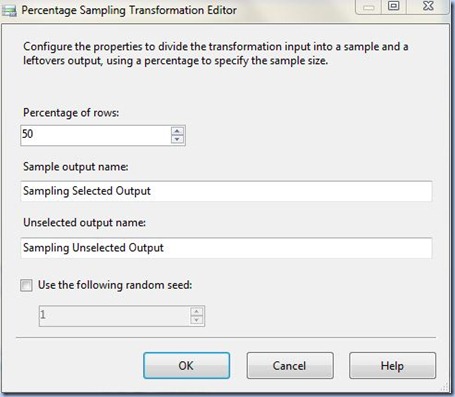

Using the template SSIS package defined in the Third Magic Class post, copy and paste Package.dtsx in the project and rename it (e.g. Package Percentage Sampling.dtsx). From the Toolbox, add a Percentage Sampling Transformation and link it to the OLE DB Source. Open the Percentage Sampling Editor and change the ‘Percentage of rows’ value from 10 to 50. It doesn’t really make sense to rename the sample and unselected outputs, though you might need to do that when dealing with multiple Percentage Sampling Transformations.

Note:

The percentage of rows you’d like to work with depends entirely on the requirements; in many cases it’s advisable to determine the size of your sample statistically. Given that the number of records in this example is quite small, I preferred to use a medium-sized sample (50%).
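As a rough illustration of what determining the sample size statistically can look like, the sketch below applies Cochran's formula with a finite population correction. The function name `cochran_sample_size` and the default parameters (95% confidence, 5% margin of error, maximum variability) are assumptions chosen for the example, not values taken from this post.

```python
import math

def cochran_sample_size(population, z=1.96, p=0.5, margin=0.05):
    """Estimate a sample size with Cochran's formula and finite population correction.

    population: number of rows in the source dataset
    z:          z-score for the confidence level (1.96 ~ 95%), assumed here
    p:          expected proportion (0.5 is the most conservative choice)
    margin:     accepted margin of error, assumed here
    """
    # Sample size for a very large population
    n0 = (z ** 2) * p * (1 - p) / margin ** 2
    # Correct for the finite size of the actual population
    n = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n)

population = 97  # number of records in this example
n = cochran_sample_size(population)
# Print the sample size and the percentage of rows it corresponds to
print(n, round(100 * n / population))
```

For a dataset as small as this one, such a formula asks for most of the rows, which is one reason the percentage here was picked by hand.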

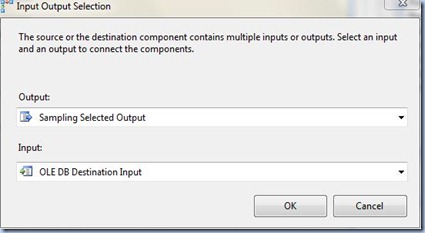

Link the Percentage Sampling Transformation to the OLE DB Destination and, in the Input Output Selection dialog, select ‘Sampling Selected Output’ as the Output. Then, in the OLE DB Destination Editor, create a new table (e.g. Production.BikesSample).

In the last step, before testing the whole package, go to the Control Flow tab and change the Execute SQL Task’s SQLStatement property to point to the current destination table:

TRUNCATE TABLE [Production].[BikesSample]

Save the project, test (debug) the package (twice) and don’t forget to validate the output data:

SELECT * FROM [Production].[BikesSample]

Note:

I was actually expecting 48 or 49 records (97/2=48.5) in the output, not 45. I wonder where the difference comes from; that’s a topic I still have to investigate. I also tried changing the percentage of rows to 25, which resulted in an output of 23 records (23*4=92), to 75, which resulted in 74 records, and to 100, in which case all the records were selected. At least the algorithm used by Microsoft partitions the output into complementary datasets.
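One plausible explanation, offered as an assumption rather than a confirmed description of the component’s internals: if each row is kept or rejected by an independent random draw, the number of selected rows only approximates the requested percentage. Microsoft’s documentation for the transformation likewise notes that the number of rows in the sample output may not exactly reflect the specified percentage. The sketch below simulates that behaviour; `percentage_sample` is a hypothetical helper and the row ids are made up.

```python
import random

def percentage_sample(rows, percentage, seed=None):
    """Split rows into (selected, unselected) using an independent draw per row.

    This mimics an assumed per-row sampling scheme; it is not the actual
    SSIS implementation.
    """
    rng = random.Random(seed)
    selected, unselected = [], []
    for row in rows:
        # Each row is kept with probability percentage/100
        if rng.random() * 100 < percentage:
            selected.append(row)
        else:
            unselected.append(row)
    return selected, unselected

rows = list(range(1, 98))  # 97 hypothetical row ids

# Repeat the 50% sampling with different seeds and record the selected counts
counts = []
for seed in range(5):
    selected, unselected = percentage_sample(rows, 50, seed=seed)
    # The two outputs always form a complementary partition of the input
    assert len(selected) + len(unselected) == len(rows)
    counts.append(len(selected))

print(counts)
```

Under this assumption the counts scatter around 48.5 rather than hitting it exactly, while a percentage of 100 always selects every row, which matches what was observed above.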
